AI

Artificial intelligence, machine learning, and the future of tech.

AI has a subsidization problem

TL;DR: If you hammer max plans, they're training on your code. But... is it even your code?

@biostoic · 3mo (edited)

Google just changed the future of UI/UX design...

TL;DR: ❌ AI replaces devs ✅ Devs use AI to replace all adjacent roles

@biostoic · 3mo (edited)

a file system is not all you need

TL;DR: DBs > Markdown

What The F**k

TL;DR: Human brain cells on a chip learn to play DOOM in a week. Creepy.

@biostoic · 3mo

Pen Testing AI

TL;DR: 🚨 Someone just open-sourced a fully autonomous AI hacker, and it's terrifying.

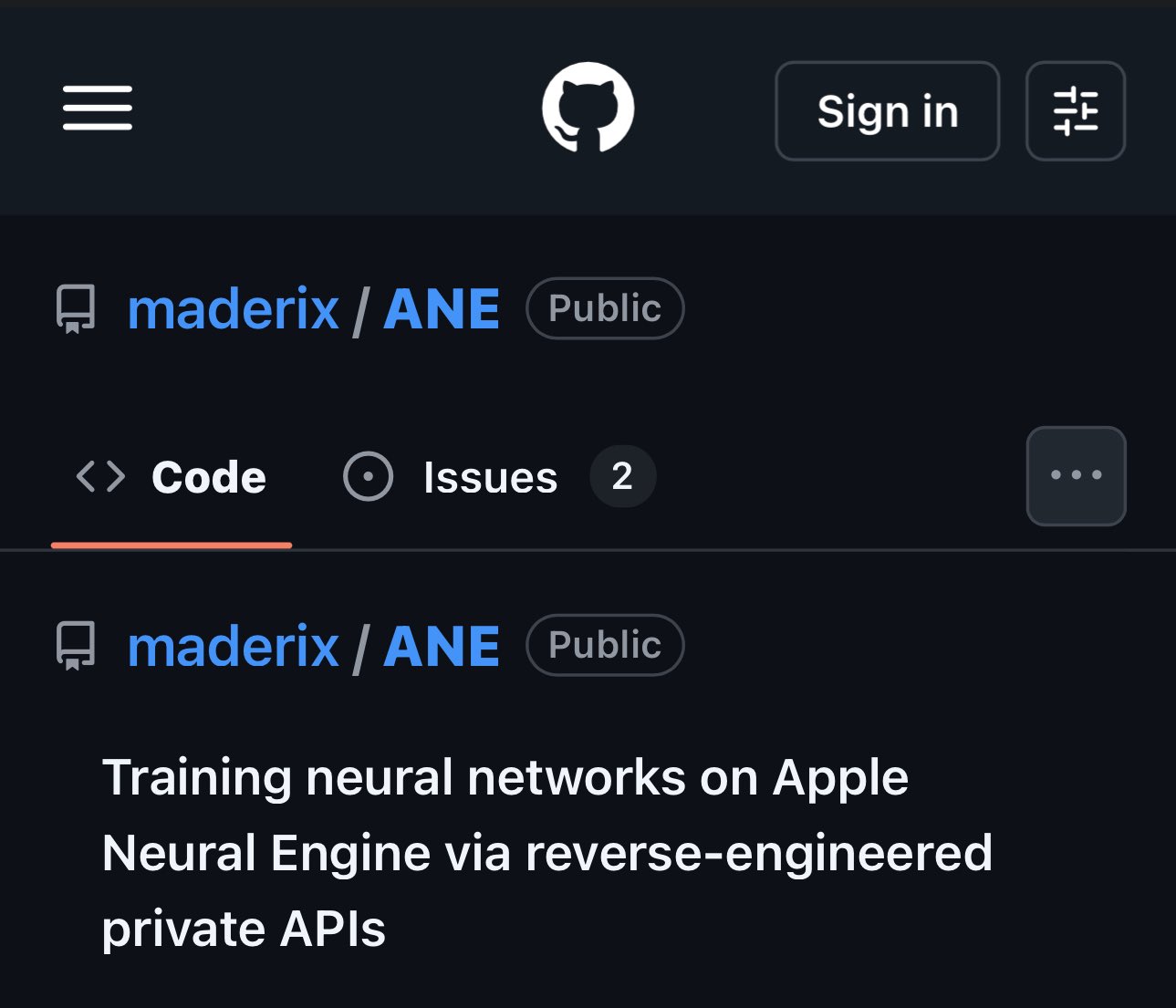

Apple’s Neural Engine Was Just Cracked

The brain runs on 20 watts and fits in your skull. The data center required to merely describe one-millionth of it would span 140 acres.

@biostoic · 3mo

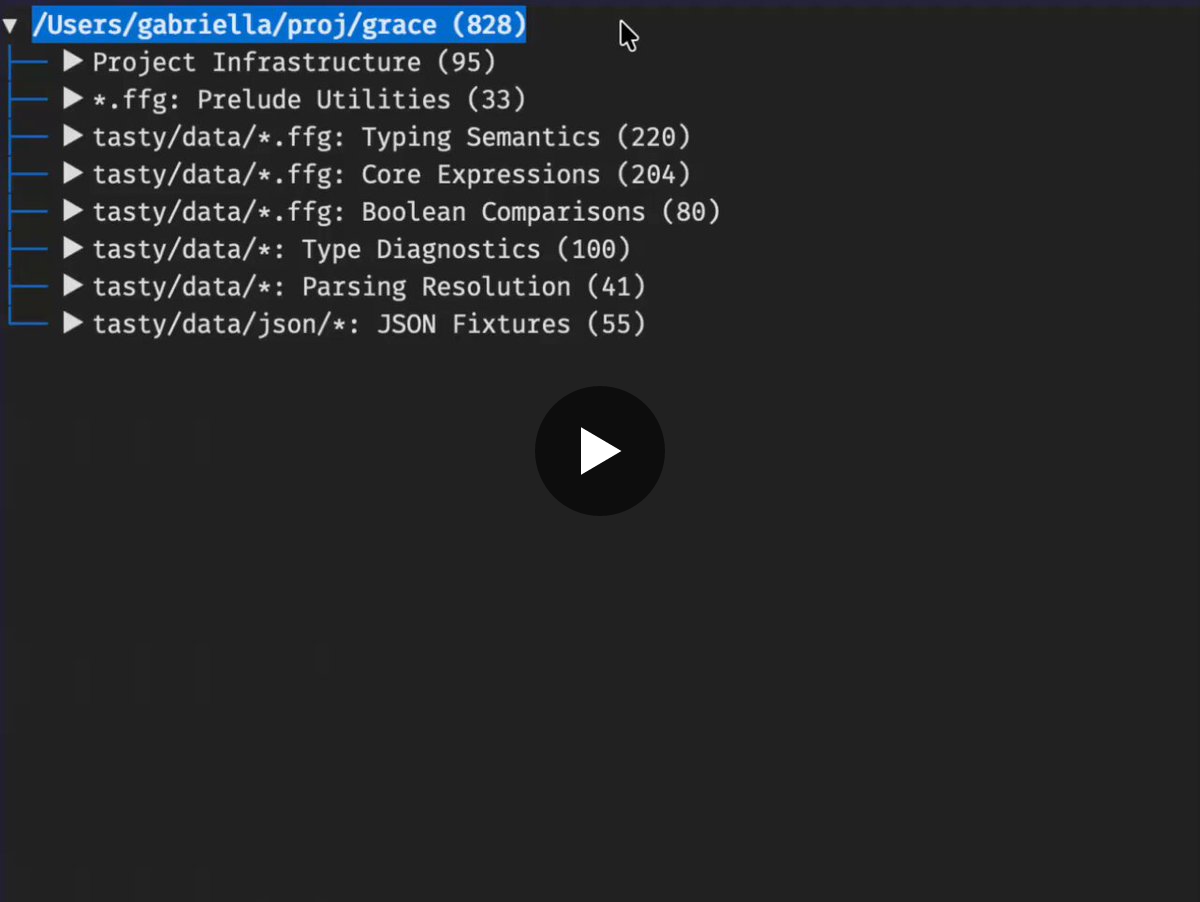

Index Code By Meaning Instead of String Match

@biostoic · 3mo (edited)

CLI Automates Apple App Store Submissions

@biostoic · 4mo (edited)

@biostoic · 4mo

The new Claude just generated the worst C compiler ever...

TL;DR: When giants stumble

OpenClaw: The Viral AI Agent that Broke the Internet - Peter Steinberger | Lex Fridman Podcast #491

TL;DR: This guy is LEGIT.

@biostoic · 4mo (edited)

AIs are Uninsurable, Risk of Multi-State Data Center Ban

TL;DR: Creative accounting blames AI for layoffs when the main driver is overhiring during COVID. Wall Street punishes overhiring and rewards AI hype.